Research at a Glance

- Role: UX Research Lead, end-to-end (grant writing, recruitment, research design, analysis, stakeholder communication)

- Timeline: 1 year (grant-funded master's thesis)

- Team: 2 designers, 3 developers, faculty advisor - I led all research decisions

- Methods: Semi-structured interviews (30), think-aloud usability testing, A/B testing (50), thematic analysis, task-flow mapping

- Participants: 80 international students (Farsi, Spanish, Chinese speakers) recruited through the international student office

- Outcomes: +80% satisfaction, +89% task success, redesigned AI chatbot shipped to production

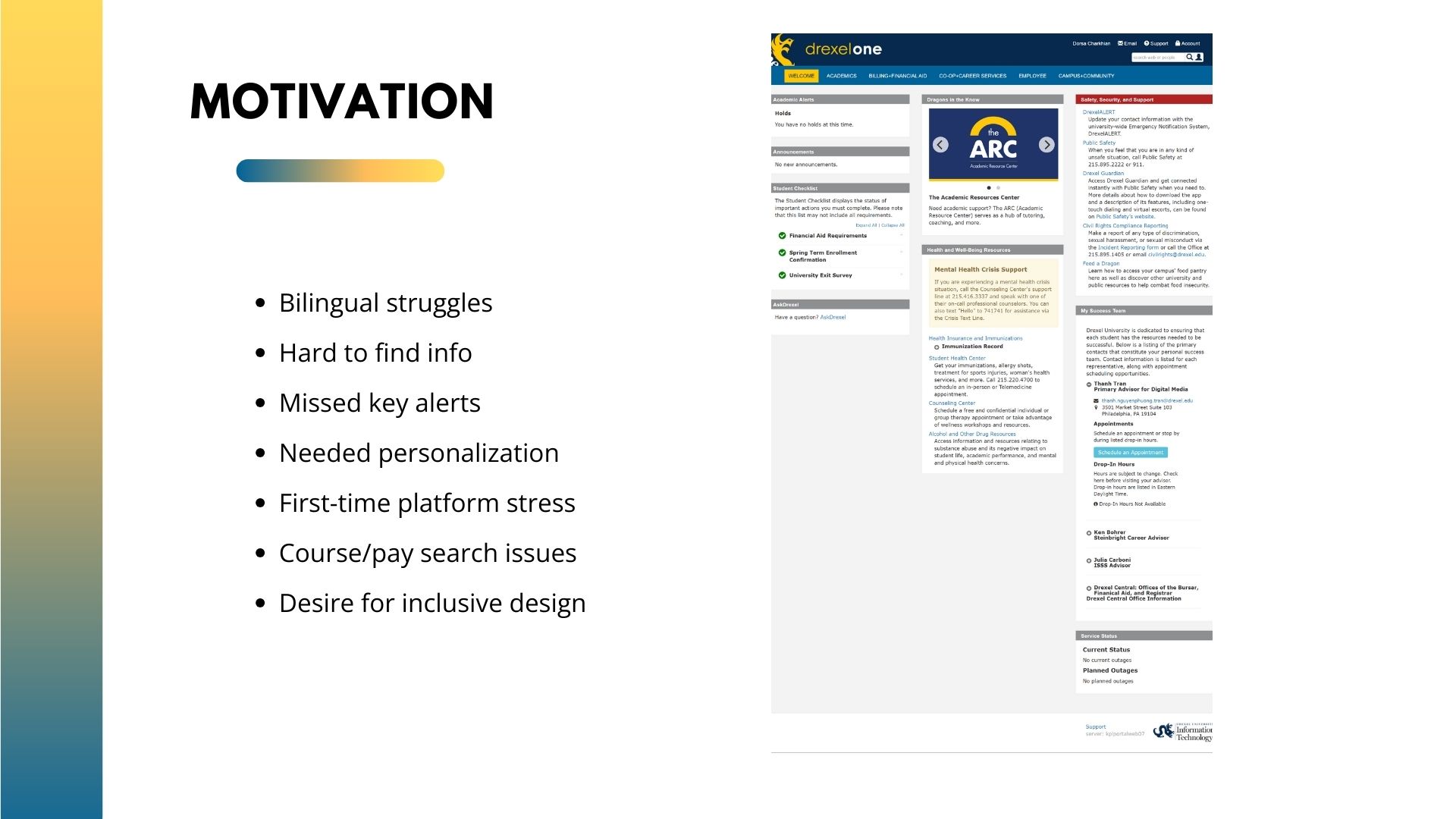

Why This Research Mattered

Drexel has over 3,000 international students. They use the same portal as everyone else - for registration, financial aid, visa documents, health insurance. But the portal wasn't built for people who think in a different language. Students were missing deadlines, calling the wrong offices, and dropping tasks halfway through.

The university wanted to "fix the language problem." I needed to figure out what the actual problem was first.

Context and Constraints

This was a grant-funded thesis project. I had a small budget, access to real students through the international student office, and a dev team of three who were building the chatbot in parallel. The main constraint was timing: I had to produce actionable findings fast enough for the team to use them during development - not after.

I also had to align with my faculty advisor, who was skeptical that AI was the right solution. Honestly, I wasn't sure either. The research had to earn that answer.

Research Questions

I framed three questions that guided everything:

- Where exactly do international students get stuck in the portal - and why?

- Does translating the interface actually improve comprehension, or does it create new problems?

- What kind of support (human, AI, structural) would most reduce confusion and task failure?

Research Plan

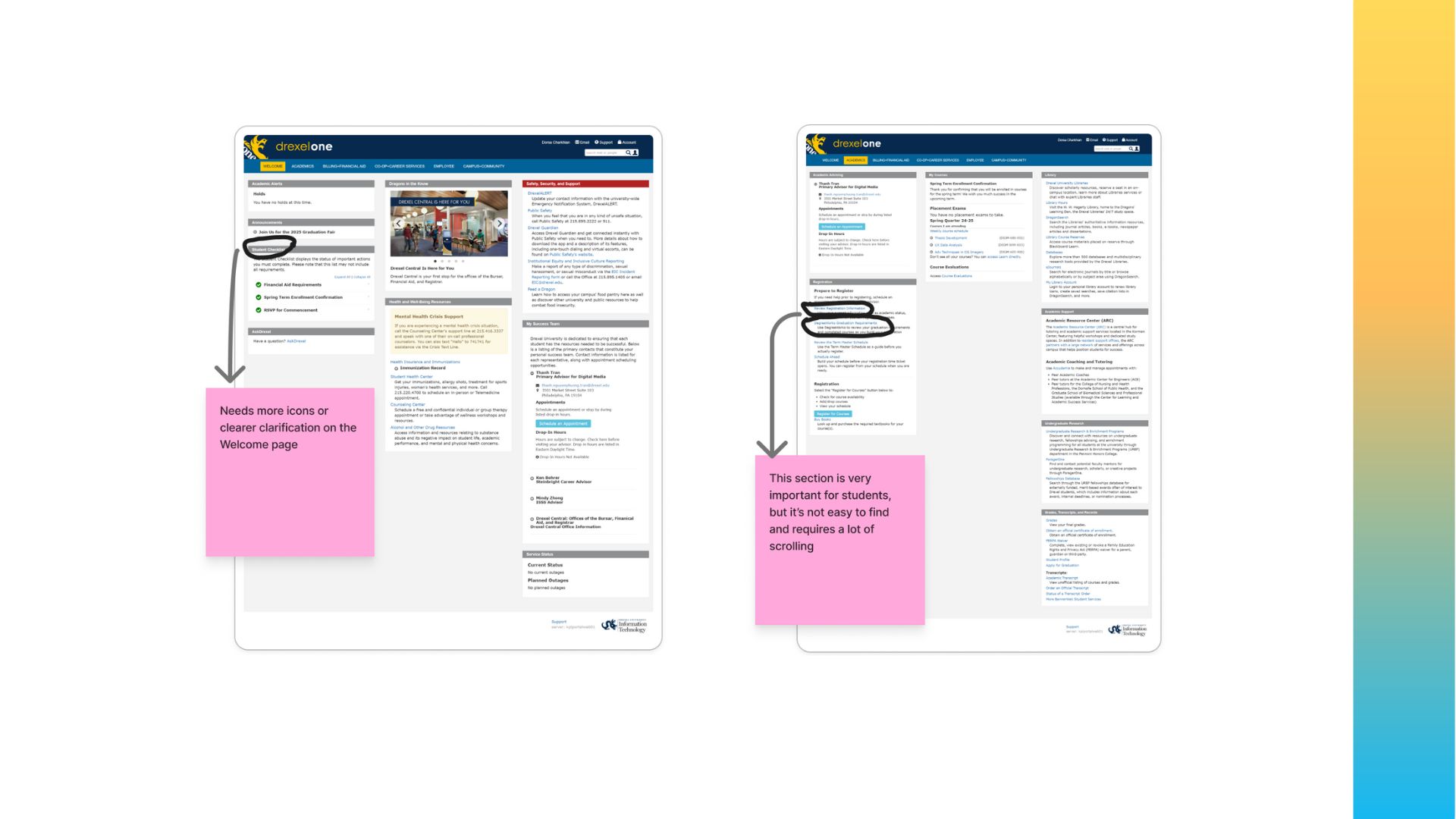

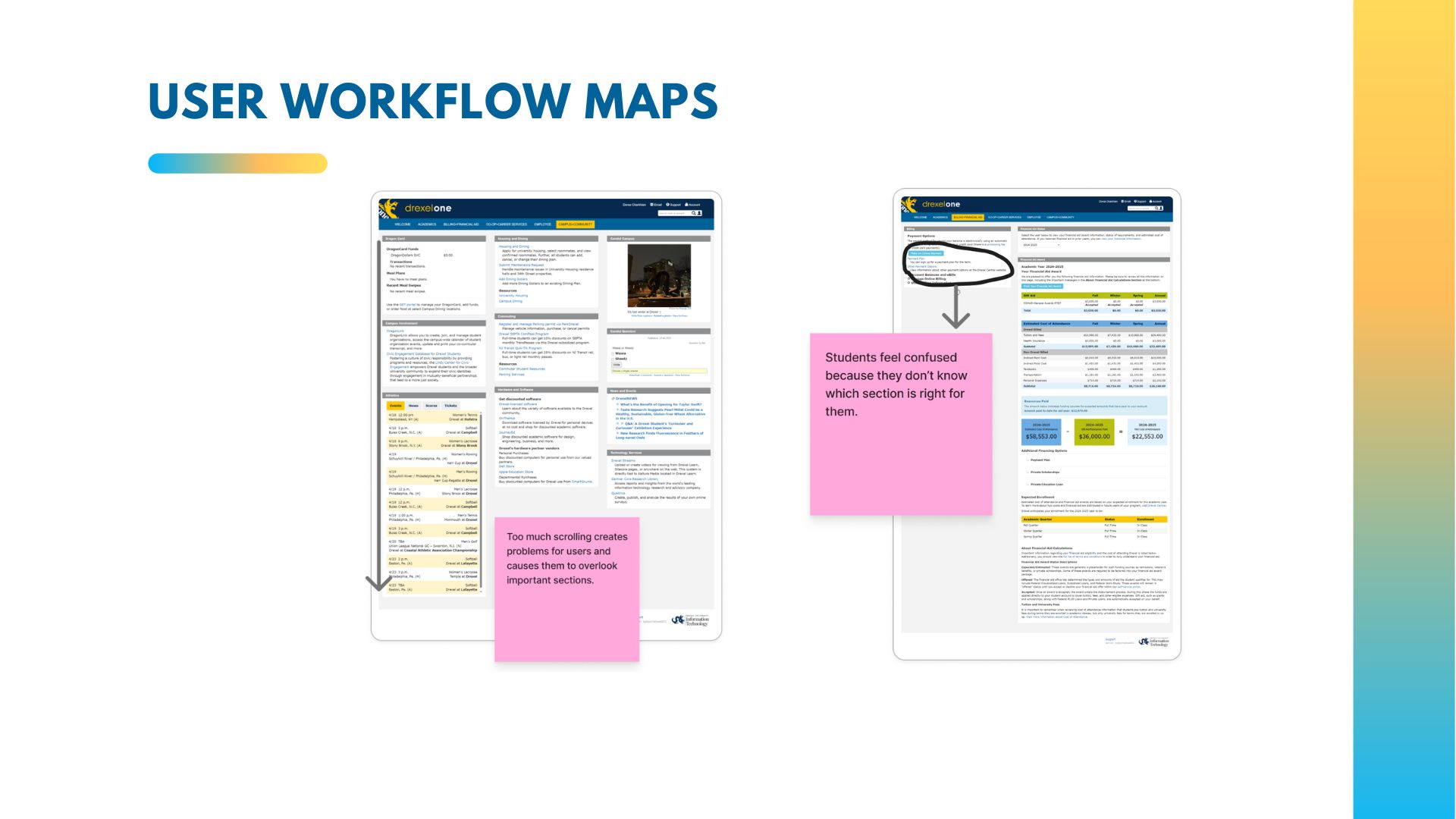

Phase 1: Discovery (months 1-4). 30 semi-structured interviews with international students. I recruited through the international student office, targeting Farsi, Spanish, and Chinese speakers specifically - the three largest non-English language groups at Drexel. Each session was 45-60 minutes: I asked students to walk through common tasks (registering for classes, checking financial aid, finding visa forms) while thinking aloud. I recorded where they paused, backtracked, or gave up.

Phase 2: Analysis (months 4-6). Thematic analysis of all 30 transcripts. I coded for confusion points, workarounds, emotional responses, and language-specific patterns. Then I mapped task flows to identify where the system failed across all three language groups - and where the failures diverged.
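As a rough illustration of the "shared versus divergent" step, here is a minimal sketch of how coded excerpts can be split into confusion points seen in every language group and those seen in only some. The three group names come from the study; the code labels and the excerpts themselves are hypothetical placeholders, not data from the transcripts.

```python
from collections import defaultdict

# Hypothetical coded excerpts as (language_group, confusion_code) pairs.
# These are illustrative placeholders, not data from the study.
coded_excerpts = [
    ("Farsi", "ambiguous_label"),
    ("Spanish", "ambiguous_label"),
    ("Chinese", "ambiguous_label"),
    ("Farsi", "mistranslated_term"),
    ("Spanish", "deep_navigation"),
    ("Chinese", "deep_navigation"),
    ("Farsi", "deep_navigation"),
]


def split_shared_and_divergent(excerpts):
    """Return (codes seen in every group, codes seen in only some groups)."""
    groups_by_code = defaultdict(set)
    all_groups = set()
    for group, code in excerpts:
        groups_by_code[code].add(group)
        all_groups.add(group)
    # A code is "shared" when every language group produced it at least once.
    shared = {c for c, g in groups_by_code.items() if g == all_groups}
    # Everything else diverges; keep the groups so the pattern stays visible.
    divergent = {c: sorted(g) for c, g in groups_by_code.items() if g != all_groups}
    return shared, divergent


shared, divergent = split_shared_and_divergent(coded_excerpts)
print("Shared across all groups:", sorted(shared))
print("Divergent:", divergent)
```

This only counts presence or absence per group. In practice the coding itself was qualitative, so a tally like this supports the thematic analysis rather than replacing it.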

Phase 3: Testing (months 7-10). A/B testing with 50 additional students. I tested the original portal against our redesigned version (simplified navigation + AI chatbot). I measured task completion, time-on-task, satisfaction scores, and comprehension, with comprehension checks given in each student's primary language.
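One common way to compare task completion between two versions is a two-proportion z-test. The sketch below assumes a between-subjects design with the 50 students split evenly across versions, which is an assumption for illustration only. The counts are hypothetical and are not the study's results.

```python
import math


def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Compare completion rates of version A and version B.

    Returns (rate_a, rate_b, z, two_sided_p_value).
    """
    rate_a = success_a / n_a
    rate_b = success_b / n_b
    # Pooled rate under the null hypothesis that both versions perform the same.
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("Rates are all 0 or all 1; the z-test is undefined.")
    z = (rate_b - rate_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value


# Hypothetical counts (NOT study data): completions out of 25 students per version.
rate_a, rate_b, z, p_value = two_proportion_z_test(10, 25, 20, 25)
print(f"original: {rate_a:.0%}, redesign: {rate_b:.0%}, z = {z:.2f}, p = {p_value:.4f}")
```

With groups this small, an exact test such as Fisher's may be a safer choice than the normal approximation.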

Phase 4: Iteration (months 10-12). Two rounds of usability testing on the chatbot prototype. Each round led to specific design changes based on where students still struggled.

Stakeholder Alignment

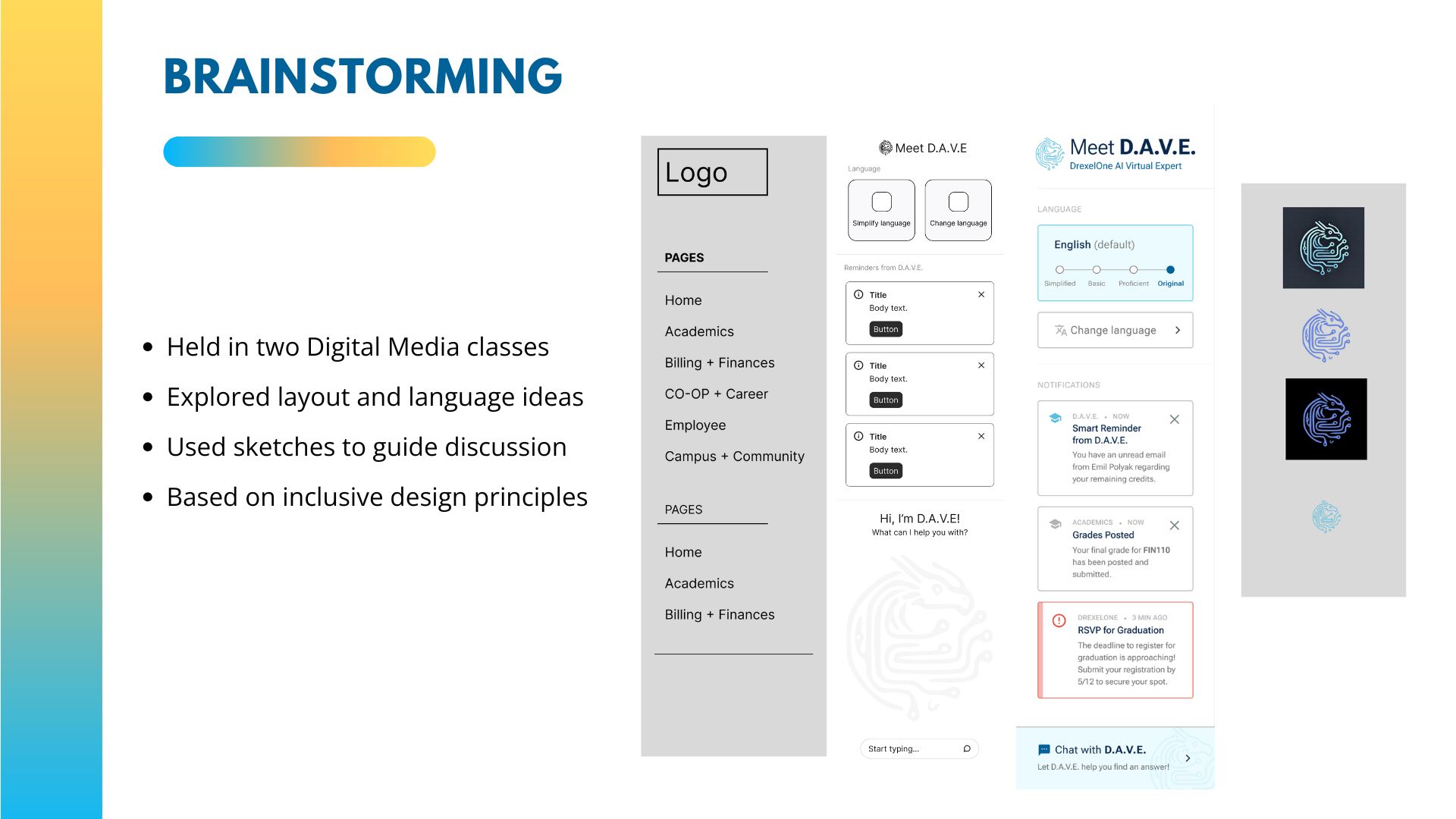

The dev team assumed translation was the answer. My advisor was skeptical about AI. The international student office wanted quick fixes. I had three audiences with three different expectations.

I shared findings every two weeks - not polished reports, but raw patterns with student quotes. When the team saw a student say "I translated this to Farsi and it made less sense," that did more than any slide deck. The research earned credibility by being transparent, not by being perfect.

Key Findings

Finding 1: Translation made things worse. Word-for-word translation of institutional terms (e.g., "bursar," "registrar," "holds") created false confidence. Students thought they understood, but the translated terms didn't carry the same institutional meaning. Farsi speakers consistently misinterpreted "academic hold" as a positive status.

Finding 2: The problem wasn't language - it was structure. All three language groups got stuck at the same points: deep navigation hierarchies, ambiguous labels, and tasks split across multiple pages. Native English speakers struggled at these points too - just less visibly.

Finding 3: Students wanted explanation, not translation. When I asked "what would help?" the answer was consistent: "explain what this means and what I should do." Not in their language - in plain terms. They wanted context, not word-swapping.

Finding 4: Trust depended on timing. Students trusted the AI chatbot most when it appeared at the moment of confusion - not as a general feature. Proactive, contextual help outperformed a standalone chatbot interface by a wide margin in satisfaction scores.

Recommendations and Design Decisions

Based on these findings, I recommended three changes:

- Kill the translation feature. Replace it with contextual explanations - plain-language definitions that appear when students hover or click on institutional terms.

- Build D.A.V.E. as a contextual AI assistant. Not a chatbot in the corner - an AI that detects confusion (long pauses, repeated navigation) and offers help in context. For example: "It looks like you're trying to check your financial aid. Here's what each status means."

- Flatten the navigation. Reduce the portal from 5 levels deep to 3. Rename labels using student language, not administrative language.
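The confusion signals named in the second recommendation (long pauses, repeated navigation) can be sketched as a simple rule-based check. This is an illustration under stated assumptions, not how D.A.V.E. was actually built: the `PageView` type, the `detect_confusion` function, and both thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PageView:
    page: str
    seconds_on_page: float


def detect_confusion(views, pause_threshold=45.0, revisit_threshold=3):
    """Return a short reason if the session suggests confusion, else None.

    Both thresholds are illustrative assumptions, not values from the study.
    """
    if not views:
        return None
    current = views[-1]
    # Signal 1: a long pause on the current page.
    if current.seconds_on_page >= pause_threshold:
        return f"long pause on {current.page}"
    # Signal 2: the student keeps coming back to the same page.
    visits = sum(1 for v in views if v.page == current.page)
    if visits >= revisit_threshold:
        return f"repeated visits to {current.page}"
    return None


session = [
    PageView("financial_aid", 10),
    PageView("home", 5),
    PageView("financial_aid", 8),
    PageView("home", 4),
    PageView("financial_aid", 6),
]
reason = detect_confusion(session)
if reason:
    print(f"Offer contextual help ({reason})")
```

A rule like this only decides when to offer help. What the assistant then says still has to come from the plain-language explanations the research called for.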

The team pushed back on cutting translation. I showed them the data: students who used the translated version had lower task completion rates than those who used the English version with explanations. That ended the debate.

Impact

+80% satisfaction and +89% task success, and the redesigned AI chatbot shipped to production.

Reflection

The biggest lesson: assumptions about users are most dangerous when they feel obvious. "Of course translation helps international students" felt so logical that nobody questioned it. It took 30 interviews to prove it wrong. AI can help people feel less lost - but only if you understand what's actually confusing them. The technology wasn't the hard part. Listening was.

Figma · Miro · Cursor

See the Project in Action

Short walkthrough of the master's thesis prototype and research-driven design decisions.